Emerging Signals

What the wave looks like before it arrives.

A curated feed of consequential thinking at the intersection of AI, organizational futures, and second- and third-order change.

A Grander Vision for AI: The Case for Public AI Infrastructure

IFTF Executive Director Marina Gorbis argues that AI is becoming critical infrastructure on par with electricity and water, yet is controlled by a small number of technology companies whose profits flow primarily to shareholders rather than workers or the public. The piece calls for public AI alternatives — regulated utilities, community-managed platforms, or government-owned services — to ensure equitable access, accountability, and democratic oversight. It highlights nascent efforts including the NAIRR pilot, the Journalism Cloud Alliance, and IFTF's own community AI labs as early proof points.

View Signal →

Opus 4.6 Builds Playable CLI Versions of Complex Games in a Single Run

METR researcher Nikola Jurkovic tasked Anthropic's Opus 4.6 with reimplementing Slay the Spire and Balatro as CLI games using a simple ReAct scaffold with internet access and 60 million tokens. The model produced mostly playable versions of both games in single runs, with recognizable core mechanics intact despite missing features and edge-case bugs. The researcher estimated these tasks would take an experienced software engineer several months to complete.

View Signal →

The Adolescence of Technology

Anthropic CEO Dario Amodei frames the current AI moment as a 'technological adolescence': a rite of passage where the danger isn't AI failing, but AI succeeding before our social, political, and safety systems can absorb what that success produces. The essay maps four risk categories: AI autonomy failures, misuse for mass destruction, concentration of power, and labor disruption. His through-line: doomerism and uncritical optimism are equally dangerous, and as of 2026 we are considerably closer to real risk than we were in 2023.

View Signal →

Algorithms Will Reshape Our Reading and Writing Practices

IFTF forecaster Rebecca Shamash argues that AI tools are rapidly reshaping not just how people write, but how they think — shifting knowledge workers from analysis and synthesis toward verification and task stewardship. With 86% of higher education students already using AI and ChatGPT reaching 800M weekly users, AI-mediated reading and writing is becoming normalized against a backdrop of declining reading participation and falling student literacy scores. The deeper risk, she argues, is not illiteracy but cognitive passivity: outsourcing the acts of wrestling with text and forming arguments to machines that optimize for coherence, not truth.

View Signal →

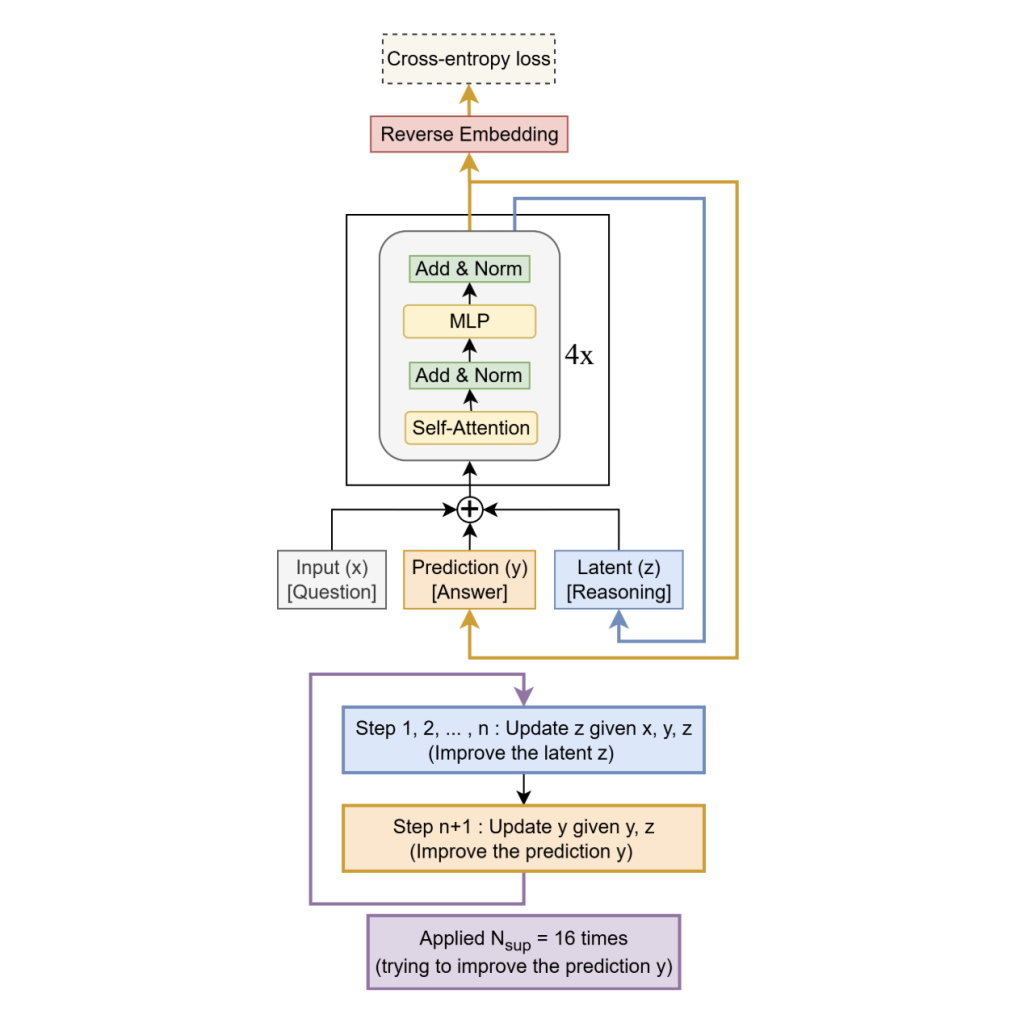

Samsung's Tiny AI Model That Beats Giants at Reasoning

Samsung's SAIT AI Lab built the Tiny Recursive Model (TRM) — 7 million parameters, 3.2MB, trained for under $500 — that outperforms systems 10,000x its size on ARC-AGI reasoning benchmarks, beating DeepSeek-R1, Gemini 2.5 Pro, and o3-mini. Rather than scaling up, TRM loops a single two-layer network over its own output up to 16 times, iteratively refining answers. It runs on a Raspberry Pi. No cloud required.
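The refinement loop described above can be sketched in a few lines. This is a toy illustration of the control flow only, not Samsung's implementation: `refine`, `toy_step`, the tolerance, and the early-stop rule are all hypothetical, and a simple numeric update (averaging toward a square root) stands in for the two-layer network.

```python
from typing import Callable, Tuple


def refine(
    x: float,
    y0: float,
    step: Callable[[float, float], float],
    max_passes: int = 16,   # cap taken from the "up to 16 times" in the summary
    tol: float = 1e-12,     # assumption: stop early once the answer stops changing
) -> Tuple[float, int]:
    """Apply the same small update function to its own output, repeatedly."""
    y = y0
    for passes in range(1, max_passes + 1):
        y_next = step(x, y)          # one pass of the shared "network"
        if abs(y_next - y) <= tol:   # answer has settled, no need to keep looping
            return y_next, passes
        y = y_next
    return y, max_passes


def toy_step(x: float, y: float) -> float:
    """Stand-in for the network: nudges the guess y toward sqrt(x)."""
    return 0.5 * (y + x / y)


answer, passes = refine(2.0, 1.0, toy_step)
print(f"answer={answer:.6f} after {passes} passes")
```

The point of the sketch is that capacity comes from reusing one small function many times rather than from stacking more parameters; in the real model the update is learned, not hand-written.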

View Signal →

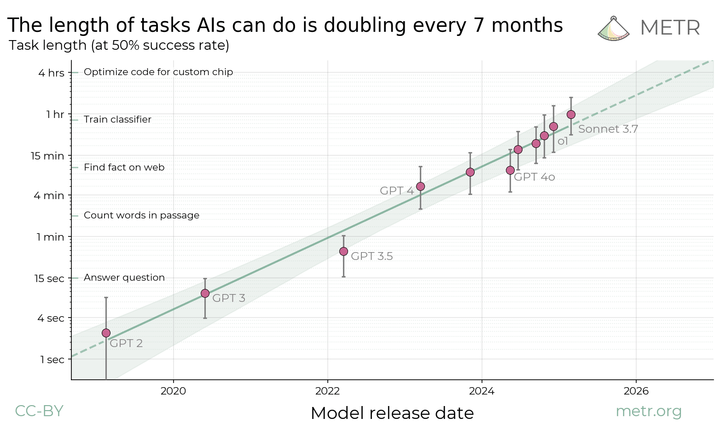

Measuring AI Ability to Complete Long Tasks

METR researchers propose measuring AI capability by the length of tasks models can reliably complete — not by benchmark scores. Their data shows frontier models now handle tasks taking humans several minutes, with that horizon doubling roughly every seven months. If the trend holds, autonomous agents could manage month-long projects within the next decade.
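A back-of-the-envelope check of that extrapolation, as a minimal sketch. The starting horizon of 5 minutes (one reading of "several minutes") and the definition of a month-long project as 160 working hours are assumptions made here for illustration; neither number comes from the summary.

```python
import math


def months_until(target_minutes: float, current_minutes: float,
                 doubling_months: float = 7.0) -> float:
    """Months needed for the task horizon to grow from current to target,
    assuming it doubles every `doubling_months` months."""
    if target_minutes <= current_minutes:
        return 0.0
    doublings = math.log2(target_minutes / current_minutes)
    return doublings * doubling_months


CURRENT_HORIZON_MIN = 5.0        # assumption: "several minutes"
WORK_MONTH_MIN = 160 * 60.0      # assumption: 160 working hours in a month

months = months_until(WORK_MONTH_MIN, CURRENT_HORIZON_MIN)
print(f"{months:.0f} months, about {months / 12:.1f} years")
```

Under these assumptions the gap is about eleven doublings, which lands between six and seven years and is consistent with "within the next decade". A longer starting horizon or a faster doubling time would shorten the estimate.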

View Signal →