Measuring AI Ability to Complete Long Tasks

Summary

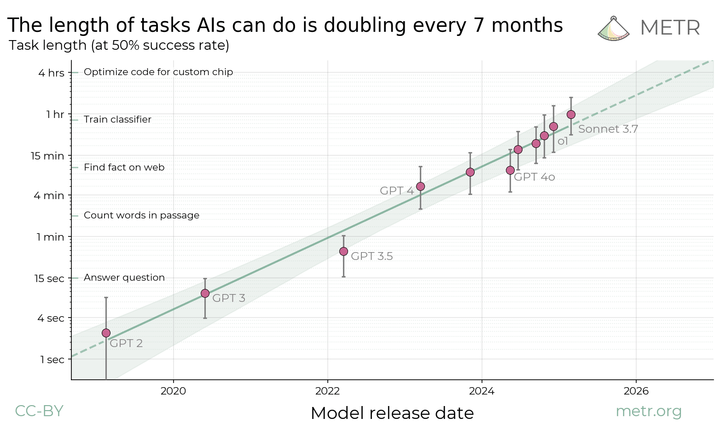

METR researchers propose measuring AI capability by the length of the tasks models can reliably complete, expressed as the time those tasks take humans, rather than by benchmark scores. Their data shows frontier models now handle tasks that take humans several minutes, and that this task horizon has been doubling roughly every seven months. If the trend holds, autonomous agents could manage month-long projects within the next decade.
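
The extrapolation behind that claim is simple exponential arithmetic, and a rough sketch may make it easier to check. The snippet below assumes a constant seven-month doubling time, as the summary states. The starting horizons (5, 15, and 60 minutes) and the figure of about 167 working hours per month are illustrative assumptions, not numbers taken from the article, and the function name `months_to_reach` is hypothetical.

```python
import math


def months_to_reach(start_minutes: float, target_minutes: float,
                    doubling_months: float = 7.0) -> float:
    """Months for the task horizon to grow from start to target,
    assuming it doubles every `doubling_months` months."""
    if start_minutes <= 0 or target_minutes <= 0 or doubling_months <= 0:
        raise ValueError("all arguments must be positive")
    doublings = math.log2(target_minutes / start_minutes)
    return doublings * doubling_months


# Assumption: a "month-long project" is about 167 working hours.
WORK_MONTH_MINUTES = 167 * 60

if __name__ == "__main__":
    # Assumed starting horizons, in minutes of human working time.
    for start in (5, 15, 60):
        months = months_to_reach(start, WORK_MONTH_MINUTES)
        print(f"start={start:>2} min -> {months:5.1f} months "
              f"({months / 12:.1f} years)")
```

Under these assumptions, each of the starting points reaches a work-month horizon in well under ten years, which is consistent with the "within the next decade" framing. The result is only as good as the assumption that the doubling time stays constant.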

Related Signals

Samsung's Tiny AI Model That Beats Giants at Reasoning

The Adolescence of Technology

Opus 4.6 Builds Playable CLI Versions of Complex Games in a Single Run

A Grander Vision for AI: The Case for Public AI Infrastructure

Algorithms Will Reshape Our Reading and Writing Practices

Second Order

As AI task horizons extend from minutes to hours to days, the nature of human oversight fundamentally shifts. Managers won't be reviewing outputs — they'll be setting objectives and checking in at milestones. The skills that matter become goal specification, constraint setting, and exception handling rather than execution. Organizations that don't start building those muscles now will find themselves unable to direct the systems they've deployed.

Third Order

A seven-month doubling cycle means capability is expanding faster than institutions can adapt. By the time organizations have policies for AI handling hour-long tasks, AI will already be handling day-long tasks. The third-order consequence is a permanent state of governance lag, in which the rules always describe what AI could do last year, not what it is doing now.
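
To put a rough number on that lag, the sketch below computes how much the task horizon grows while a policy is being written, again assuming a constant seven-month doubling time. The function name `growth_factor` and the 12-month and 21-month policy cycles are hypothetical, chosen only for illustration.

```python
def growth_factor(elapsed_months: float, doubling_months: float = 7.0) -> float:
    """Multiplier on the task horizon after `elapsed_months`,
    assuming it doubles every `doubling_months` months."""
    if elapsed_months < 0 or doubling_months <= 0:
        raise ValueError("elapsed_months must be >= 0 and doubling_months > 0")
    return 2.0 ** (elapsed_months / doubling_months)


if __name__ == "__main__":
    # Assumed policy cycle lengths, in months.
    for cycle in (12, 21):
        print(f"{cycle} months -> horizon x{growth_factor(cycle):.2f}")
```

On these assumptions, a policy that takes a year to draft describes systems whose task horizon has since grown a little more than threefold. After 21 months (three doublings) the horizon is eight times longer, which is about the gap between an hour-long task and a full eight-hour working day.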